AI-Driven Development for teams in 2026

AI-driven development and SDLC in 2026

Our co-founder, Lev Perlman, gave a talk at Cooper Parry's VentureCEO Accelerator Programme about the future of AI-driven software development and the SDLC.

This is a very hands-on talk with concrete suggestions on team structure, workflow and implementation, a useful toolbox, and an overview of the must-haves (the dependencies needed to make it happen).

Watch it here:

Transcript:

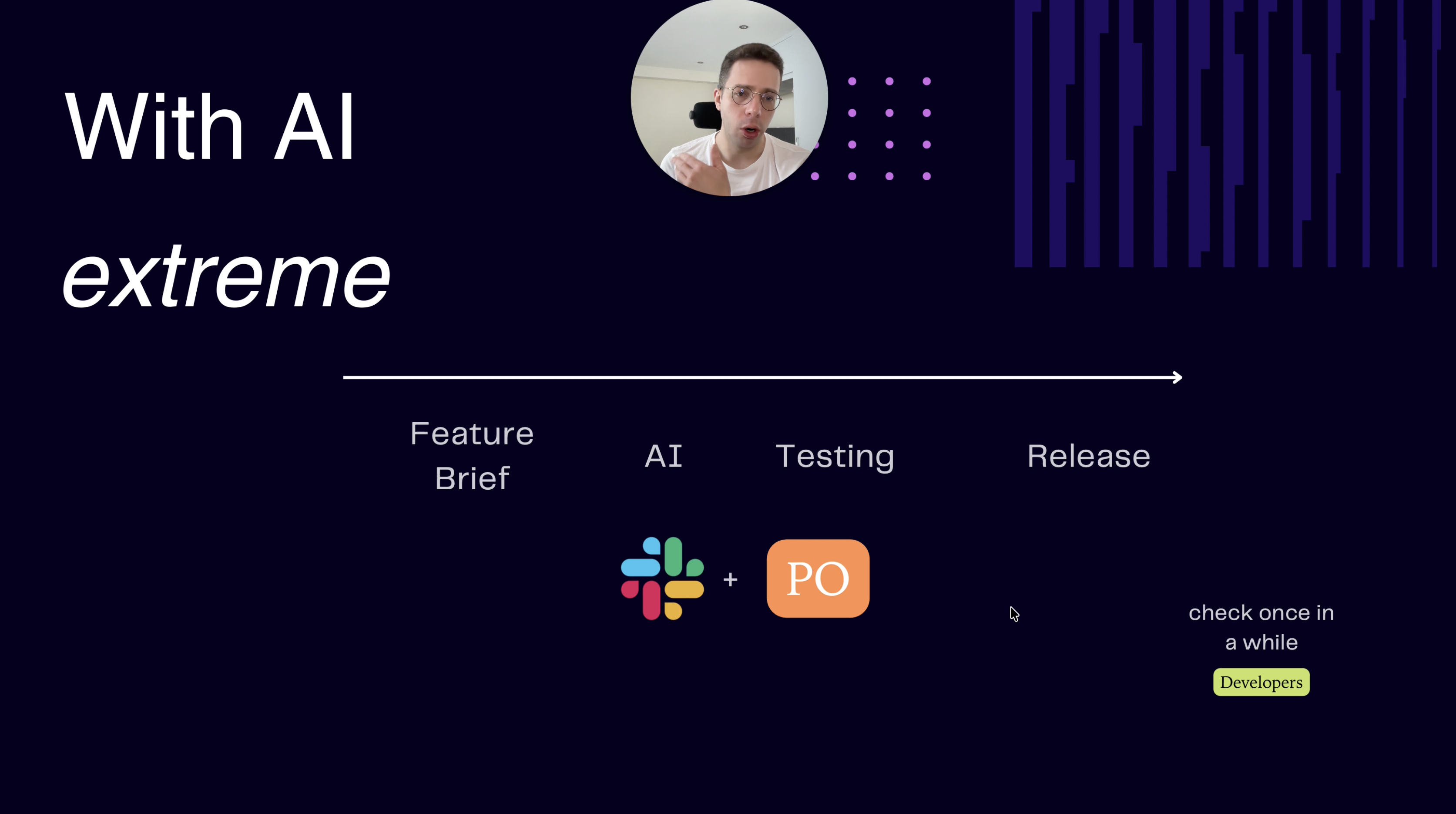

Hi. I recently held this talk at the VentureCEO Accelerator by Cooper Parry, and I got really good feedback, so I thought I'd share the slides and a very quick run-through here. Let me know what you think. We're going to do it very quickly because no one has time, but the full original slides will be posted separately.

So: AI-driven development in 2026.

Before we had what we have today with AI, this was a typical software development lifecycle. You would have a feature brief done by a PO, then design and UX, then ticket creation, all done by product managers, product owners and delivery managers. Then software development, testing and release were done by the tech team. This whole thing could take a few days, a few weeks or a few months, depending on complexity. That used to be the typical lifecycle, with the roles you can see here each taking responsibility for a step.

Now, with AI, we could potentially reach a stage (and many smaller companies and bootstrapped founders are already doing this; I've done it a few times for a few products as well) where we have just four steps: the feature brief, then straight to development, iterating on the platform itself without a design step in the middle, then straight to testing, and release to production. Basically, one person or a few people could wear multiple hats to get this whole thing going.

A more extreme example is where you have just one non-technical, well,
semi-technical person in a company who just uses Slack to code. For example, you connect Cursor to Slack (we'll talk about the tools a bit later in the presentation), and a non-technical or semi-technical PO just feeds it requirements and receives a preview link to review what's been done. If they're happy, they push it to production. Done. No other steps, no other people needed.

It's a bit like vibe coding. It's very dangerous for serious systems that require access to sensitive data or PII, so do not do this if that is your case. But say you're building a small utility tool that you yourself would use day to day, and suddenly you see the potential for it not just to improve your own workflow but maybe to make some money on the side. If it's just a utility, with no health data and no PII, you can already do this today. It will be fine. Maybe check in once in a while with a developer to review the code and make sure it's okay.

This is an example where I've done exactly that workflow, with Cursor, on a platform we've built internally. I'm a technical person, so I could review the code and see whether it's good, but even if you're not technical, you can still do this. Just be very careful, and have someone review it properly from time to time.

Now, realistically, if you're a proper company with staff, let's say 20 or 30 people today, you cannot switch to a workflow where one person does everything. It's impossible. So let's look at what phase one with AI could look like: human prompting and AI execution, with delivery roughly doubled, let's say. This is not scientific, just a feeling, but it does happen like this. You still follow the same steps, but by using AI in every single step, you can optimize and make it faster, and each person and each discipline will know what they need to do, and which AI tools they need to use, to make it
better. Great. Easy. Most companies are already there today, unless they're massive, old companies.

With phase 2, we could reach a stage (and I think that's where we want to get to) where we have, let's say, non-technical business or product stakeholders and a small, lean tech team, split down the middle. The non-technical people use coding agents, a whole AI toolbox, to create the prototypes, the tickets, the briefs, the design, everything. They do that in parallel. So let's say you have a business team of, I don't know, five people, including the C-levels. Each of them will know something they want to do or optimize in the business: run an experiment, build a tool or build a feature. They just do that in parallel. It could be on the actual codebase, but without production access, of course: no access to production at all. They can run it in parallel, create a few prototypes and a few previews, and see how it goes. Maybe present it to a few customers or internal users and validate product-market fit for that specific thing. If it works, they hand the prototype over to the dev team, with the spec and everything else they already have, just to make it production-grade.

So it no longer has to be a linear pipeline where everything is fed in for the developers to build from a feature spec, doing the whole thing themselves. Instead, the business runs many prototypes and experiments in parallel and builds the MVP or POC of the feature or product by themselves using AI agents. Then the dev team takes that in parallel, assesses it and turns it into production-grade code that is safe to deploy: they review it, fix it, make sure there are no data leaks, no data bleeding, no security issues and so on, test it and release it. And
obviously, they will also use agents themselves to optimize the workflow and do it quicker. We'll talk about that in a sec.

So, the toolbox, which is super important. For POs, there are many tools that will really help you day to day. Let's zoom in here for a sec. PostHog for product analytics: it has a very good AI-based chat for getting insights from your product analytics and user behavior. Cursor, just as an example, because it has a non-techie agent mode where you feed it requirements and it does the work for you. It then even sends you a video demoing what's been done, including a preview link if your setup supports it, and it can be connected to your Slack so you just code through that. Easy. UX Pilot generates user journeys and designs from a prompt, and you then iterate on them in text. Again, that makes it super quick to build a whole user journey, user flow or whatever you need. PandasAI is just one example of software that connects to your database. Connect it with read permissions only, never write permissions, and if you need production data, point it at your production replica. Then you can chat with your database in natural language, ask it questions and get answers in plain English without having to write SQL or anything like that. Super easy, but never connect it with write access: read access only, to a read-only replica of the database.

Designers use Paper, Cursor, Figma. It's all standard, so I won't focus on that too much. Developers have their own stack, and each one chooses what they want. Claude Code, amazing. Cursor, amazing. Everyone's happy. There are open-source alternatives as well. The one I will focus on is Greptile, for AI-based code reviews. It's one of those rare examples where a piece of software actually does what it should do instead of just agreeing with the user. So many AI tools will agree with whatever you tell them: one will review your code and find something that may be
bullshit, actually. And you will say, "No, no, no, I don't think that's an issue. I think it's fine." And then it will say, "Okay, you're right. It's fine." Greptile does not do that. It will argue with you, and it has helped us catch a few things that we missed ourselves when reviewing the code. So it's really, really good: it actually helps you spot issues at the code review stage. It works well, and our whole dev team loves it. So yes, I would absolutely use it.

Finally, automation testing. You can use natural language to write tests, and agents to create Playwright automation. With Maestro, tests are scripted in almost plain English; you can pre-record them or write them yourself, or with AI, and it works very, very well and keeps the release cycle quick. I also think it's a must in the age of AI to have a very strong set of automated tests, both unit tests and automation tests, because otherwise you'll just be screwed when you try to release something. There will be a massive bottleneck if you have a lot of things done but have to test them manually one by one. You will never get stuff to production. So you must have a strong, automated set of tests that run and that you trust for production releases.

Okay, so a few must-haves for this to work well. First, your infrastructure setup must have preview environments, or dynamic environments, because without them you will not be able to test whatever the AI agents create in isolation. It's quite easy to achieve with Supabase (maybe not Firebase, but Supabase for sure): you get a dynamic environment and a dynamic database schema generated per preview, per branch. Then, when your AI agents spit out a bunch of branches with a bunch of features from different business people, these can be tested safely in isolation without affecting your other main test data
or test database. So this is very, very important: preview or dynamic environments. That's the first step. Without it, you will not be able to move.

Finally, for the business people to be independent, they need a living, breathing document with the company knowledge. Not just the business stuff, but the actual context: the tech stuff, the tech requirements, the architecture, the constraints, what's possible, what we should do and what we shouldn't do. This whole thing is a must. So I would just create a doc, make sure it's regularly updated, and then create a Gem in Gemini or a GPT in ChatGPT from it, so that it contains all the company knowledge, know-how and constraints. Then business people will not create stuff that is not technically possible to deliver to production later. It is a faff to keep it up to date, but developers have an incentive to do so, because it saves them time and cuts down the inbound from the business of features that should never happen or never go to production because of technical constraints or other business reasons.

So yeah, I would say this is it. The other things in the slides were just engagement questions for the audience, so I'm not going to cover them now. Let me know what you think. Reach out with any questions, or if you need help with AI adoption in your company, whether through the dev team, with the dev team or without the dev team; it doesn't really matter. We do the whole thing, and we explain and train even the staff across your company who are a bit resistant or hesitant. So feel free to reach out for a free chat. We'll have a coffee and see where we take it from there. Cool. Thank you very much.
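The talk's rule for tools like PandasAI is to give them read access only, ideally against a read-only replica. That rule is worth enforcing at the connection level rather than trusting the tool to behave. This is a minimal sketch, not PandasAI's API. SQLite stands in for a real replica, and `open_read_only`, `seed_replica` and `read_only_report` are hypothetical helper names. It relies on the fact that Python's built-in `sqlite3` module can open a database file with `mode=ro`, after which SQLite itself refuses every write:

```python
import sqlite3
import tempfile
from pathlib import Path


def open_read_only(db_path: str) -> sqlite3.Connection:
    """Open an existing SQLite file so that SQLite itself rejects every write.

    With mode=ro, SQLite also refuses to create the file if it is missing.
    """
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    return sqlite3.connect(uri, uri=True)


def seed_replica(db_path: str) -> None:
    """Create a tiny stand-in 'replica' using an ordinary read-write connection."""
    rw = sqlite3.connect(db_path)
    try:
        rw.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
        rw.executemany("INSERT INTO orders (total) VALUES (?)", [(19.99,), (5.0,)])
        rw.commit()
    finally:
        rw.close()


def read_only_report(db_path: str) -> tuple[int, str]:
    """Run one read and one attempted write; return (row count, write error message)."""
    ro = open_read_only(db_path)
    try:
        # Reads work normally: this is all a chat-with-your-data tool needs.
        (count,) = ro.execute("SELECT COUNT(*) FROM orders").fetchone()
        try:
            ro.execute("DELETE FROM orders")
            error = ""  # reaching this line would mean the guard failed
        except sqlite3.OperationalError as exc:
            error = str(exc)
        return count, error
    finally:
        ro.close()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = str(Path(tmp) / "replica.db")
        seed_replica(path)
        print(read_only_report(path))
```

With PostgreSQL or another server database, the equivalent would be a dedicated role that holds only `SELECT` grants and connects to the read replica. Either way, the tool never holds credentials that can write.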
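The per-branch preview databases recommended in the talk need a predictable naming scheme. With one, CI can create a schema when a branch opens and drop it when the branch is merged. Supabase's branching feature is designed to handle this for you. What follows is a hypothetical, hand-rolled alternative for a plain PostgreSQL setup. It assumes a `preview_` prefix and a `preview_app` role, both invented for illustration, and it only builds the SQL strings rather than executing them:

```python
import hashlib
import re

# PostgreSQL truncates identifiers longer than 63 bytes (NAMEDATALEN - 1).
MAX_IDENTIFIER_LEN = 63
PREFIX = "preview_"  # hypothetical naming convention
APP_ROLE = "preview_app"  # hypothetical role the preview deployment connects as


def preview_schema_name(branch: str) -> str:
    """Map a git branch name to a deterministic, valid, unique-enough schema name."""
    # Lowercase, then collapse every run of characters outside [a-z0-9] to "_".
    slug = re.sub(r"[^a-z0-9]+", "_", branch.lower()).strip("_") or "branch"
    # A short hash of the original name keeps branches apart even when they
    # slugify to the same text or share a long prefix that gets truncated.
    digest = hashlib.sha256(branch.encode("utf-8")).hexdigest()[:8]
    room = MAX_IDENTIFIER_LEN - len(PREFIX) - len(digest) - 1  # 1 for the "_" separator
    return f"{PREFIX}{slug[:room].rstrip('_')}_{digest}"


def provision_sql(branch: str) -> list[str]:
    """SQL a CI job might run when a preview is created (returned, not executed)."""
    schema = preview_schema_name(branch)
    return [
        f'CREATE SCHEMA IF NOT EXISTS "{schema}";',
        # Grant the preview role access to this schema only; it should hold no
        # grants on the shared test schema or on anything in production.
        f'GRANT USAGE, CREATE ON SCHEMA "{schema}" TO {APP_ROLE};',
    ]


def teardown_sql(branch: str) -> str:
    """SQL a CI job might run when the branch is merged or closed."""
    return f'DROP SCHEMA IF EXISTS "{preview_schema_name(branch)}" CASCADE;'


if __name__ == "__main__":
    for statement in provision_sql("feature/Add-Login"):
        print(statement)
    print(teardown_sql("feature/Add-Login"))
```

The hash suffix guards against two cases that would otherwise map to the same schema. One is truncation to PostgreSQL's 63-byte identifier limit. The other is two branch names that differ only in case or punctuation.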
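The phase 2 model in the talk rests on one rule. Business people can prototype on the real codebase, but with no access to production at all, and only the dev team releases, after review and testing. Below is a minimal, deny-by-default sketch of that rule as a deployment check. The role names, the `Env` values and the `reviewed`/`tests_passed` flags are assumptions for illustration. In practice this would more likely live in your CI/CD platform's environment protection settings than in application code:

```python
from enum import Enum


class Env(Enum):
    PREVIEW = "preview"
    STAGING = "staging"
    PRODUCTION = "production"


# Hypothetical policy: which roles may deploy to which environments.
# Roles or environments missing from this table are denied by default.
DEPLOY_POLICY: dict[str, frozenset[Env]] = {
    "business": frozenset({Env.PREVIEW}),  # prototypes and previews only
    "dev": frozenset({Env.PREVIEW, Env.STAGING, Env.PRODUCTION}),
}


def may_deploy(role: str, env: Env, *, reviewed: bool = False, tests_passed: bool = False) -> bool:
    """Return True only if this role may ship to this environment right now."""
    if env not in DEPLOY_POLICY.get(role, frozenset()):
        return False
    if env is Env.PRODUCTION:
        # Production also needs a completed code review and a green automated
        # test run, mirroring the talk's emphasis on review and trusted tests.
        return reviewed and tests_passed
    return True


if __name__ == "__main__":
    print(may_deploy("business", Env.PREVIEW))  # True
    print(may_deploy("business", Env.PRODUCTION, reviewed=True, tests_passed=True))  # False
    print(may_deploy("dev", Env.PRODUCTION, reviewed=True, tests_passed=True))  # True
```

Keeping the table deny-by-default means a new role or environment gets no access until someone adds it deliberately.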