AI Compliance Checklist: Transparency and Ethics

- Ethical, Legal, and Compliance Standards in AI | Exclusive Lesson

- Setting Ethical Principles for AI

- Making AI Systems Transparent

- Detecting and Reducing Bias

- Protecting User Data and Privacy

- Adding Human Oversight

- Monitoring and Improving AI Systems

- Getting Stakeholder Input

- Training Teams on Responsible AI

- Conclusion

- FAQs

AI Compliance Checklist: Transparency and Ethics

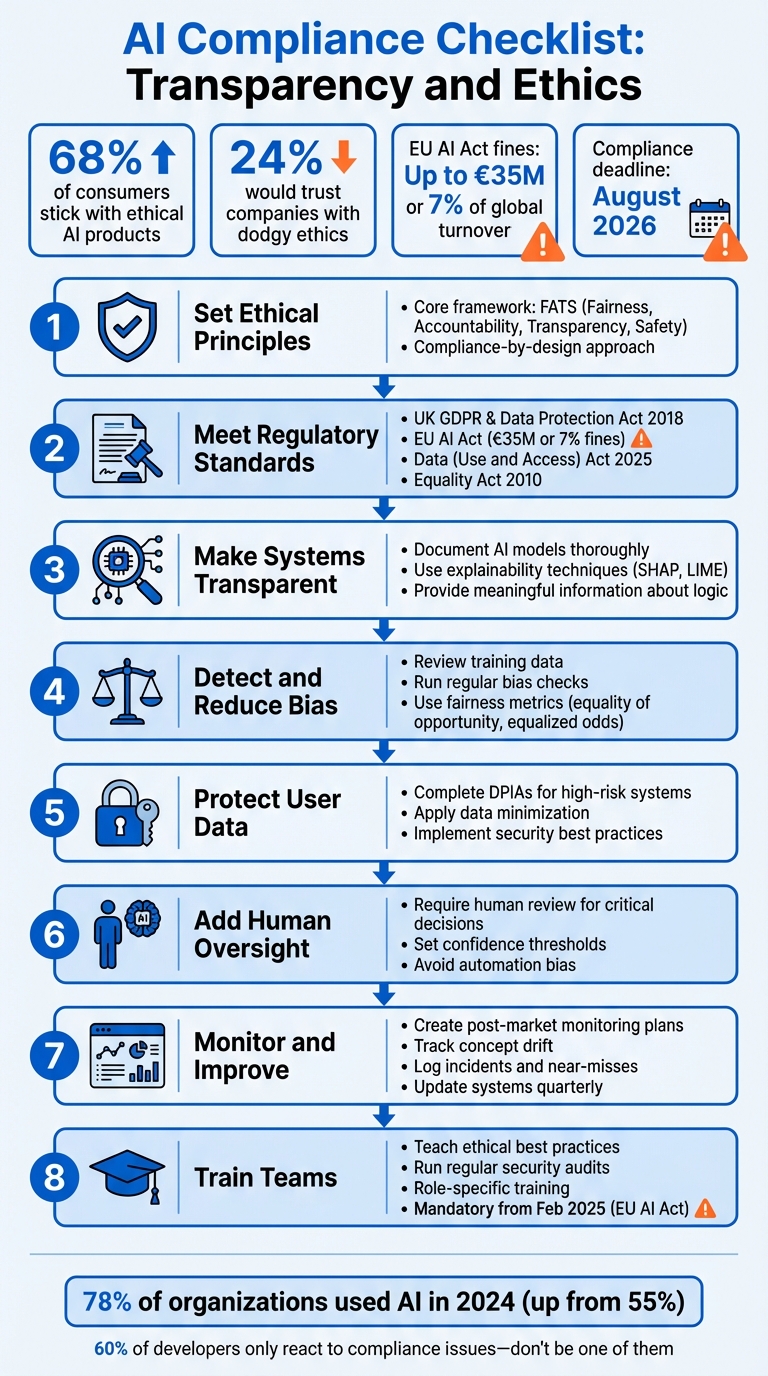

Artificial intelligence is everywhere now - from hiring systems to medical diagnostics. But here’s the kicker: 68% of consumers stick with AI products they see as ethical, while only 24% would trust companies with dodgy ethics. So, if you’re building AI, being transparent and ethical isn’t just a nice-to-have - it’s a must. And regulators aren’t messing about either. The EU AI Act has banned practices such as social scoring since February 2025, its obligations for general-purpose AI models have applied since August 2025, and most of its high-risk rules are due to apply from August 2026. Fines for the most serious breaches reach €35 million or 7% of global turnover. Add GDPR to the mix, and ignoring this stuff could cost you millions.

Here’s the deal: most companies struggle to turn lofty ethical principles into daily practices. That’s where a practical checklist comes in handy. Think of it as baking ethics into your AI from day one. From technical due diligence, including bias audits and explainability techniques, to human oversight and privacy protections, this guide covers it all. Let’s break it down into actionable steps so you can stay compliant, build trust, and avoid those hefty fines.

AI Compliance Checklist: 8-Step Framework for Ethical and Transparent AI Systems


Setting Ethical Principles for AI

Before diving into model development, it’s crucial to establish your organisation's core values. Think of ethics as setting the rules of the game, while governance ensures those rules are followed in every model and decision made day-to-day. A staggering 60% of AI developers admit to only reacting to compliance issues as they arise, which increases legal risks and undermines trust [7]. Clearly, this is not the way forward.

The smarter approach is compliance-by-design - essentially baking legal and ethical requirements into the system from the start, rather than tacking them on as an afterthought [7]. To do this effectively, focus on four key principles: Fairness, Accountability, Transparency, and Safety (FATS) [8][4]. These pillars should reflect your organisation’s values while also meeting the regulatory requirements in your industry. Once you’ve nailed down these principles, the next step is to align them with the specific regulatory standards that apply to your work.

Meeting Regulatory Standards

The UK’s regulatory framework for AI is layered, so understanding the key pieces of legislation is essential. At the core, you’ve got the UK GDPR and the Data Protection Act 2018, which govern any AI systems handling personal data [10][1]. On top of these, the Data (Use and Access) Act 2025, which received Royal Assent on 19th June 2025 and is being brought into force in stages, updates the rules for automated decision-making [8]. If your AI is used in areas like recruitment or credit scoring, the Equality Act 2010 also applies, prohibiting discrimination based on protected characteristics [1][11].

For organisations placing AI systems on the EU market, or whose AI outputs are used in the EU, the EU AI Act is a big deal. Penalties are steep - fines for the most serious breaches can reach €35 million or 7% of global annual turnover [3]. To ensure compliance, classify your AI systems under the EU AI Act's risk tiers. For example, tools used for recruitment or credit scoring must meet strict requirements such as risk management, robust data governance, and human oversight [3]. Some uses, like social scoring or subliminal manipulation, are outright banned.

Public sector organisations in the UK face additional obligations under the Algorithmic Transparency Recording Standard (ATRS). This standard requires documenting and publishing details about how algorithms influence decision-making processes [1].

Building an Ethical Framework

Before you even think about tackling transparency or bias, you need a solid ethical framework in place. Start by drafting a board-level policy - a concise, two-page document that outlines your organisation’s ethical principles and assigns a senior AI lead to take responsibility [8]. A key component of this framework is purpose limitation. This means clearly defining what an AI system is designed to do and setting boundaries for what it shouldn’t be used for. For instance, if a chatbot is built for customer support, it shouldn’t be quietly repurposed for evaluating employee performance without a fresh ethical review.

Next, maintain a risk register. This is a living document that lists all your AI systems, their intended purposes, potential risks, and the steps you’re taking to mitigate those risks [8]. Aim to update it every quarter. Alongside this, carry out Data Protection Impact Assessments (DPIAs) early in the development process to identify any risks to individuals’ rights and freedoms [9].
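A risk register can live in a spreadsheet, but keeping it as structured data makes the quarterly review easy to check automatically. The sketch below assumes a hypothetical `RiskEntry` schema (the field names and the 91-day review window are illustrative, not taken from any standard) and lists the systems whose review has lapsed.

```python
from dataclasses import dataclass
from datetime import date, timedelta


# Hypothetical schema for one risk-register row; field names are illustrative.
@dataclass
class RiskEntry:
    system: str
    intended_purpose: str
    risks: list[str]
    mitigations: list[str]
    owner: str
    last_reviewed: date

    def is_overdue(self, today: date, review_days: int = 91) -> bool:
        """True if the entry hasn't been reviewed within roughly one quarter."""
        return today - self.last_reviewed > timedelta(days=review_days)


def overdue_entries(register: list[RiskEntry], today: date) -> list[str]:
    """Return the names of systems whose quarterly review has lapsed."""
    return [entry.system for entry in register if entry.is_overdue(today)]


register = [
    RiskEntry(
        system="cv-screening",
        intended_purpose="Shortlist applicants for human recruiters",
        risks=["Indirect discrimination (Equality Act 2010)"],
        mitigations=["Quarterly bias audit", "Human review of every rejection"],
        owner="Head of Talent",
        last_reviewed=date(2025, 1, 10),
    ),
    RiskEntry(
        system="support-chatbot",
        intended_purpose="Answer customer support questions",
        risks=["Wrong answers", "Repurposing for staff evaluation"],
        mitigations=["Escalation to a human agent", "Fresh review before any new use"],
        owner="Head of Support",
        last_reviewed=date(2025, 5, 2),
    ),
]

print(overdue_entries(register, today=date(2025, 6, 1)))  # ['cv-screening']
```

The `intended_purpose` field doubles as a record of purpose limitation: if someone wants to use the chatbot for something else, the register shows what was originally approved.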

Even when you’re using third-party AI solutions, the responsibility doesn’t stop with the vendor. Include clauses in your contracts requiring bias testing, explainability, and audit rights [1][11]. Lastly, ensure meaningful human oversight. This means the human reviewer must have the power to challenge and overturn algorithmic decisions, not just rubber-stamp them [11][9].

Making AI Systems Transparent

Transparency is key when it comes to AI. It’s not just about ticking compliance boxes - it’s about helping people understand how decisions are made. If users can’t see how an AI system works, they’re left in the dark about how their data is being used or how to challenge decisions that affect them. As the Information Commissioner’s Office (ICO) points out, without clarity, people simply can’t grasp how AI systems impact their lives. And let’s face it, a lack of transparency often leads to errors and, worse, harmful outcomes [14][1].

So, how do you make AI systems more transparent? Start by documenting your AI models thoroughly and using explainability techniques to make decisions easier to interpret. These steps aren’t just good practice - they’re necessary to meet UK GDPR rules (Articles 12-15 and 22), which require providing "meaningful information about the logic involved" in automated decisions [14].

Documenting AI Models

Keeping detailed records is like having a roadmap for your AI system. It helps with regulatory audits, troubleshooting, and even internal accountability. Here’s what you should focus on:

- Model selection justification: Why did you choose a particular algorithm? If you went for a "black box" model over a simpler one, explain your reasoning. Consider risks, the potential impact on individuals, and how you’ve worked to minimise bias.

- Data sources and quality: Document where your data comes from, how it’s labelled, and the steps taken to ensure accuracy. Whether it’s traditional datasets or unconventional sources like social media feeds, include metadata to clarify what the data represents.

- Underlying logic: What input features matter most? What parameters drive results? And how do correlations influence the outcomes? Spell it all out.

- Version history: Keep track of every model update - what changed and how those changes affected outputs.

- Audit trail of explanations: Record the explanations you’ve provided to individuals, complete with timestamps, reference numbers, and the exact content shared.
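For the audit trail of explanations, one option is an append-only log where each entry stores a reference number, a timestamp, the model version and the exact wording shared. The sketch below is a minimal illustration with hypothetical function names (`record_explanation`, `verify_log`) and an in-memory list; it chains SHA-256 hashes so that quietly editing an earlier entry becomes detectable. A real system would persist this to durable, access-controlled storage.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone


def _entry_hash(body: dict, previous_hash: str) -> str:
    # Hash the entry's content together with the previous entry's hash,
    # so editing or deleting an earlier record breaks every later hash.
    payload = json.dumps(body, sort_keys=True) + previous_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def record_explanation(log: list, model_version: str, subject_ref: str, text: str) -> dict:
    """Append one explanation, with reference number and timestamp, to the log."""
    body = {
        "reference": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "subject_ref": subject_ref,
        "explanation": text,
    }
    previous_hash = log[-1]["hash"] if log else ""
    entry = {**body, "hash": _entry_hash(body, previous_hash)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute the hash chain; False means a record was altered."""
    previous_hash = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["hash"] != _entry_hash(body, previous_hash):
            return False
        previous_hash = entry["hash"]
    return True


audit_log = []
record_explanation(audit_log, "credit-model-2.3", "applicant-0042",
                   "Length of employment was the main factor in this credit decision.")
record_explanation(audit_log, "credit-model-2.3", "applicant-0043",
                   "Recent missed payments were the main factor in this credit decision.")
print(verify_log(audit_log))  # True
audit_log[0]["explanation"] = "Edited after the fact."
print(verify_log(audit_log))  # False
```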

"Compliance-by-design means building AI systems that are responsible by default, not by patch." – VerifyWise [7]

When presenting this information, think layers. Start with the basics - like why a decision was made - and make the nitty-gritty technical details available for those who want them. Translate complex maths into plain English so non-technical folks can follow along. And don’t forget to assign someone in your organisation to oversee explainability and transparency policies. This kind of documentation lays the groundwork for explainability techniques.

Using Explainability Techniques

Not all AI models are equally transparent. Some, like linear regression or decision trees, are naturally easier to understand. These are great for industries like finance or healthcare, where clarity is non-negotiable. Their straightforward logic - like a consistent rate of change - makes them easier to audit and explain [12]. If your system handles sensitive data, the ICO suggests prioritising these simpler models and steering clear of "black box" systems [12].

But what if you’re using a more complex model, like a neural network? That’s where explainability tools come in:

- Local explanations: These focus on specific predictions, showing which inputs influenced an outcome.

- Global explanations: These look at the bigger picture, identifying which features are most important across all predictions.

- Counterfactual tools: These explore "what if" scenarios - like how a higher income might have led to a loan approval [12].

- Sensitivity analysis: This tests how changes in inputs affect outputs.

- Proxy models: These create simplified versions of complex systems to approximate their behaviour.
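Counterfactual and sensitivity checks are easier to grasp with a toy example. The sketch below treats a made-up logistic scoring function as the "opaque" model (its coefficients and feature names are assumptions for illustration) and asks two questions: how much does the output move when one input changes, and what is the smallest income that would have turned a rejection into an approval?

```python
import math


# Hypothetical stand-in for an opaque loan model: features in, approval probability out.
# The coefficients are made up for illustration.
def approval_probability(applicant: dict) -> float:
    z = (-6.0
         + 0.00008 * applicant["income"]
         + 0.3 * applicant["years_employed"]
         - 1.5 * applicant["missed_payments"])
    return 1.0 / (1.0 + math.exp(-z))


def sensitivity(model, applicant: dict, feature: str, delta: float) -> float:
    """How much the output moves when a single input is nudged by `delta`."""
    nudged = {**applicant, feature: applicant[feature] + delta}
    return model(nudged) - model(applicant)


def income_counterfactual(model, applicant: dict, threshold: float = 0.5,
                          step: int = 1_000, max_income: int = 500_000):
    """Smallest income (searched in `step` increments) that flips the decision to approve."""
    candidate = dict(applicant)
    while candidate["income"] <= max_income:
        if model(candidate) >= threshold:
            return candidate["income"]
        candidate["income"] += step
    return None  # no flip within the searched range


applicant = {"income": 40_000, "years_employed": 2, "missed_payments": 1}
print(round(approval_probability(applicant), 3))
print(income_counterfactual(approval_probability, applicant))  # 87000
print(sensitivity(approval_probability, applicant, "missed_payments", -1) > 0)  # True
```

A brute-force search like this only varies one feature at a time; dedicated counterfactual tools search across several features at once and check that the suggested changes are realistic for the person concerned.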

"The rationale explanation is key to understanding your AI system and helps you comply with parts of the GDPR. It requires looking 'under the hood' and helps you gather information you need for some of the other explanations, such as safety and performance and fairness." – ICO and The Alan Turing Institute [12]

When decisions have a big impact - like in hiring, lending, or healthcare - focus on making the reasoning clear. Start with "Rationale" and "Responsibility" explanations, and leave the more technical details for a secondary layer aimed at experts or auditors [13]. For example, instead of referencing a coefficient, say something like, "Length of employment was the main factor in this credit decision" [13].
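Turning a model's internals into a sentence like that can be as simple as ranking each feature's contribution and mapping the top one to a plain-English label. This sketch assumes a hypothetical linear credit model with made-up coefficients, and measures each contribution against an "average applicant" baseline.

```python
# Hypothetical linear credit model. Coefficients and the "average applicant"
# baseline are illustrative, not taken from any real scorecard.
COEFFICIENTS = {"years_employed": 0.30, "income_thousands": 0.08, "missed_payments": -1.50}
BASELINE = {"years_employed": 5.0, "income_thousands": 45.0, "missed_payments": 0.5}
PLAIN_NAMES = {
    "years_employed": "Length of employment",
    "income_thousands": "Income",
    "missed_payments": "Number of missed payments",
}


def contributions(applicant: dict) -> dict:
    """Each feature's push on the score relative to the average applicant."""
    return {f: COEFFICIENTS[f] * (applicant[f] - BASELINE[f]) for f in COEFFICIENTS}


def plain_english_reason(applicant: dict) -> str:
    contrib = contributions(applicant)
    top = max(contrib, key=lambda f: abs(contrib[f]))  # largest push in either direction
    direction = "in favour of" if contrib[top] > 0 else "against"
    return (f"{PLAIN_NAMES[top]} was the main factor in this credit decision, "
            f"weighing {direction} approval.")


print(plain_english_reason({"years_employed": 1, "income_thousands": 47, "missed_payments": 0}))
# Length of employment was the main factor in this credit decision, weighing against approval.
```

The full `contributions` dictionary is the kind of detail that belongs in the secondary layer for experts and auditors.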

If you’re working with opaque models, tools like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) can help explain their behaviour [12]. Be sure to document why you chose a specific model, especially if it’s a "black box", and include clear instructions for users on how to challenge an AI-driven decision. Don’t forget to provide a human point of contact for further support [14][4].
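SHAP is built on Shapley values from game theory: each feature's share of the gap between a prediction and a baseline prediction, averaged over every order in which the features could be "switched on". For a handful of features you can compute them exactly by brute force, as in the sketch below. This is not the SHAP library's API; the function name and the choice to fill "absent" features with a single baseline value are assumptions made for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x: dict, baseline: dict) -> dict:
    """Exact Shapley values by enumerating every coalition of features.

    Features outside a coalition take their baseline value. The cost grows
    as 2**n, so this only suits a handful of features.
    """
    features = list(x)
    n = len(features)

    def value(coalition: frozenset) -> float:
        point = {f: (x[f] if f in coalition else baseline[f]) for f in features}
        return model(point)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight for a coalition of this size that excludes f
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


# Hypothetical model with an interaction term between a and b.
def toy_model(p: dict) -> float:
    return 2 * p["a"] + p["a"] * p["b"]


phi = shapley_values(toy_model, x={"a": 1, "b": 1}, baseline={"a": 0, "b": 0})
print(phi)  # {'a': 2.5, 'b': 0.5}
# The values add up to toy_model(x) - toy_model(baseline) = 3 - 0.
```

The SHAP library relies on approximations and model-specific shortcuts precisely because exact enumeration becomes impractical as the number of features grows.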

Detecting and Reducing Bias

When it comes to building ethical AI systems, spotting and tackling bias is a big deal. It’s not just about doing the right thing - it’s also about avoiding regulatory headaches, reputational damage, or even discriminatory outcomes. Bias in AI generally falls into two buckets: statistical bias (think data errors or measurement issues) and societal bias (like historical inequalities baked into the data) [15]. The trick is to catch these problems early and deal with them head-on.

Reviewing Training Data

Bias often sneaks in through the training data. So, the first step is to ask: is your dataset a fair reflection of the population your AI is meant to serve? It’s not about having mountains of data - it’s about having the right data [15]. You’ll also want to dig into where your data comes from, how it was collected, and whether there are any known flaws [1].

Getting input from stakeholders is key here. They can challenge your assumptions and spot risks of marginalisation that you might have missed [15]. There are a few ways to tackle bias in your data:

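- Pre-processing: Fix the data before training, for example by reweighting or resampling under-represented groups, or by removing discriminatory examples.

- In-processing: Build fairness constraints into the training algorithm itself.

- Post-processing: Adjust the model's outputs, such as its decision thresholds, to improve fairness.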
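As one concrete pre-processing example, "reweighing" gives each training example a weight so that, in the weighted data, group membership and outcome are statistically independent. The sketch below assumes a single protected attribute and binary labels, and the data is invented. Many training libraries accept per-example weights, so the output can be passed in at training time.

```python
from collections import Counter


def reweighing_weights(groups: list, labels: list) -> list:
    """Per-example weights w(g, y) = P(g) * P(y) / P(g, y)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group) -> float:
    """Share of (weighted) positive labels within one group."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total


# Illustrative data: group A has 3/4 positive labels, group B only 1/4.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)
for g in ("A", "B"):
    print(g, round(weighted_positive_rate(groups, labels, weights, g), 3))  # both 0.5
```

Reweighing leaves every record in place, which can make it easier to justify in documentation than deleting examples outright.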

Once you’ve sorted your data, don’t stop there. Set up regular bias checks to keep things fair over time.

Running Regular Bias Checks

Bias detection isn’t a “set it and forget it” kind of job - it’s an ongoing process. Start with pre-deployment checks to catch problems before your AI goes live. Then, keep an eye on things with regular monitoring to spot any performance drifts or fairness issues as they crop up [5]. If you make major updates to your system, that’s a good time to trigger a review [3]. For high-stakes systems, consider doing formal audits, like updating your Data Protection Impact Assessment, at least once a year [3].

"Ongoing evaluation is necessary to maintain responsible AI practices throughout an AI system's lifecycle." – Lumenalta [6]

To make life easier, you can automate fairness and robustness tests right into your CI/CD pipelines [5]. Use fairness metrics like equality of opportunity or equalised odds to see how well your system is performing [15]. And don’t forget to put a post-market monitoring plan in place to track incidents, close calls, or any discriminatory results in real-world use [3]. With enterprise AI adoption jumping from 55% to 78% in just a year [5], keeping up with bias checks has never been more important.
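Equality of opportunity asks whether people who should get a positive outcome receive one at the same rate in every group (equal true-positive rates); equalised odds also requires equal false-positive rates. The sketch below computes both gaps and applies a hypothetical CI rule that fails when either gap exceeds 0.1. The threshold and function names are assumptions; the right tolerance depends on your context and should be agreed with legal and domain experts.

```python
def group_rates(y_true, y_pred, groups, group):
    """True-positive rate and false-positive rate for one group."""
    tp = fn = fp = tn = 0
    for t, p, g in zip(y_true, y_pred, groups):
        if g != group:
            continue
        if t == 1 and p == 1:
            tp += 1
        elif t == 1:
            fn += 1
        elif p == 1:
            fp += 1
        else:
            tn += 1
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError(f"Group {group!r} needs both positive and negative examples")
    return tp / (tp + fn), fp / (fp + tn)


def fairness_gaps(y_true, y_pred, groups) -> dict:
    """Largest between-group gaps for equality of opportunity and equalised odds."""
    rates = [group_rates(y_true, y_pred, groups, g) for g in sorted(set(groups))]
    tpr_gap = max(r[0] for r in rates) - min(r[0] for r in rates)
    fpr_gap = max(r[1] for r in rates) - min(r[1] for r in rates)
    # Equality of opportunity compares TPRs only; equalised odds needs TPR and FPR to match.
    return {"equal_opportunity_gap": tpr_gap, "equalised_odds_gap": max(tpr_gap, fpr_gap)}


def passes_fairness_gate(gaps: dict, max_gap: float = 0.1) -> bool:
    """Hypothetical CI rule: pass only if every gap is within `max_gap`."""
    return all(value <= max_gap for value in gaps.values())


y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A"] * 4 + ["B"] * 4
gaps = fairness_gaps(y_true, y_pred, groups)
print(gaps)  # {'equal_opportunity_gap': 0.5, 'equalised_odds_gap': 0.5}
print(passes_fairness_gate(gaps))  # False
```

In a pipeline, a small script would run this on held-out predictions and exit with a non-zero status when the gate returns `False`, which stops the release.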

If you’re not sure where to start, companies like Metamindz (https://metamindz.co.uk) can help. They specialise in setting up bias detection frameworks and ensuring your AI systems stay on the right side of compliance.

Protecting User Data and Privacy

When it comes to ethical and compliant AI, safeguarding user data is just as crucial as addressing bias. AI systems handle vast amounts of personal information, which unfortunately makes them prime targets for cyberattacks. The Information Commissioner's Office (ICO) points out that "AI systems introduce new kinds of complexity not found in more traditional IT systems... Since AI systems operate as part of a larger chain of software components, data flows, organisational workflows and business processes, you should take a holistic approach to security." [16]

AI models are not invincible. They can fall prey to attacks like model inversion, where attackers can reconstruct sensitive training data, or membership inference, which reveals whether someone’s data was part of the training set. On top of that, vulnerabilities in machine learning libraries could allow remote code execution or data reconstruction. [16] Let’s dive into the regulatory measures and technical steps you can take to keep personal data secure.

Following GDPR Requirements

If you're operating in the UK or Europe, GDPR compliance isn’t optional - it’s the law. For AI systems that pose a high risk, such as those conducting systematic evaluations, processing large amounts of sensitive data, or monitoring public spaces, you’ll need to complete a Data Protection Impact Assessment (DPIA) before you even begin processing any personal data. [1][3]

Another cornerstone of GDPR is the principle of data minimisation. The ICO clarifies that this doesn’t mean "process no personal data" or that "processing more automatically breaks the law." Instead, it’s all about processing only the data you genuinely need for your purpose. [16] In practice, that means dropping fields your model doesn’t need and using techniques like de-identification and Privacy Enhancing Technologies (PETs). Also, don’t forget to tidy up after yourself - delete any temporary or compressed files created during data transfers as soon as they’re no longer needed.
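Data minimisation and de-identification can be enforced in code at the point where data enters your AI pipeline. The sketch below assumes a hypothetical allow-list of fields and replaces the customer ID with a keyed hash (HMAC-SHA256). Bear in mind that pseudonymised data like this is still personal data under UK GDPR, because whoever holds the key can re-link it, so the key should be stored separately from the data.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the documented purpose needs.
ALLOWED_FIELDS = {"years_employed", "income", "missed_payments"}


def minimise(record: dict, secret_key: bytes) -> dict:
    """Drop fields outside the allow-list and pseudonymise the customer ID."""
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    # A keyed hash gives a stable reference for linking records in audits;
    # without the key, nobody can recompute it from a customer ID.
    kept["subject_ref"] = hmac.new(
        secret_key, record["customer_id"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return kept


raw = {
    "customer_id": "C-1029",
    "name": "Jane Example",
    "date_of_birth": "1988-04-02",
    "postcode": "AB1 2CD",
    "years_employed": 6,
    "income": 52_000,
    "missed_payments": 0,
}
print(minimise(raw, secret_key=b"load-me-from-a-secrets-manager"))
```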

Applying Data Security Best Practices

Legal compliance is a good start, but technical measures are what keep your data safe day-to-day. Start by documenting your data flows. This means tracking where your data comes from, how it’s processed, and where it’s stored. This helps you pinpoint weak spots where leaks could occur. [17] Limit access to sensitive data to only those who absolutely need it and maintain detailed audit logs to track who’s doing what with the data.

Another key tip: don’t let your models overfit. Overfitting happens when a model memorises specific examples rather than learning general patterns, making it more likely to expose sensitive information. [16] Standard techniques such as regularisation, early stopping and evaluating on held-out data help keep it in check. Beyond the model itself, implement rate limiting on your APIs to block unusual query patterns that could signal an attack, isolate your machine learning environment using virtual machines or containers to reduce risks from third-party code, and always double-check the security of any third-party libraries or dependencies you’re using.
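Rate limiting per API key is commonly done with a token bucket: each key gets a small burst allowance that refills at a steady rate, so the rapid-fire querying typical of model-inversion or membership-inference attempts gets throttled. The sketch below is an in-memory, single-process illustration with hypothetical limits (bursts of 20, refilling at 2 requests per second); a production API would usually enforce this at the gateway or in a shared store.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, then `refill_per_second` requests per second."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so behaviour can be tested deterministically
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Top up tokens for the time elapsed, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_second)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


buckets = {}  # one bucket per API key


def allow_request(api_key: str) -> bool:
    if api_key not in buckets:
        buckets[api_key] = TokenBucket(capacity=20, refill_per_second=2.0)
    return buckets[api_key].allow()


# Demonstrate with a fake clock instead of real time.
fake_now = [0.0]
bucket = TokenBucket(capacity=3, refill_per_second=1.0, clock=lambda: fake_now[0])
print([bucket.allow() for _ in range(4)])  # [True, True, True, False]
fake_now[0] = 1.0
print(bucket.allow())  # True: one token has refilled
```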

If this all sounds overwhelming, you’re not alone. For expert advice on building secure AI systems and staying compliant with GDPR and other regulations, check out Metamindz (https://metamindz.co.uk). They can help you navigate the complexities of AI data protection with confidence.

Adding Human Oversight

No matter how advanced AI systems get, they’re still prone to errors. That’s why human oversight is absolutely necessary, especially in high-stakes areas like recruitment, credit scoring, or law enforcement. As the ICO points out: "Individuals cannot hold these systems directly accountable for the consequences of their outcomes and behaviours." [4] In short, humans need to be accountable for what these systems do.

But here’s the tricky bit: oversight needs to be genuine and effective. Too often, people tasked with reviewing AI decisions just end up rubber-stamping them - a phenomenon called "automation bias." To avoid this, reviewers must have the power to question and overturn AI decisions. They also need to be independent of the AI development team, so they can make unbiased recommendations to senior decision-makers. These steps are crucial for ensuring human oversight isn’t just a formality.

Requiring Human Review for Critical Decisions

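When the stakes are high, human involvement isn’t optional - it’s a must. For high-risk systems this isn’t just good practice; it’s also a legal requirement. Under the EU AI Act, the high-risk rules are due to apply from 2nd August 2026 for most systems, and from 2nd August 2027 for AI built into products already covered by EU product-safety legislation. Breaching the high-risk obligations can bring fines of up to €15 million or 3% of global annual turnover; the top tier of €35 million or 7% is reserved for prohibited practices. [3]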

To make human reviews effective, keep things practical. Give reviewers a manageable workload so they can properly assess each case. Use standardised checklists to guide the review process, and keep detailed logs of any decisions to override the AI, including the reasons why. [18] Also, make sure there’s a clear human point of contact for anyone who wants to challenge an AI-assisted decision. [4] A clever way to test the system is through "mystery shopping" - submit misleading or edge-case data to see if reviewers are genuinely scrutinising AI outputs or just trusting them blindly. [18]
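Override logs are easier to analyse if every review is captured in the same shape. The sketch below assumes a hypothetical `ReviewRecord` schema that refuses to log a decision unless the checklist was completed, and insists on a reason whenever the reviewer overturns the AI. Tracking the override rate over time can also help spot rubber-stamping: a rate that sits at zero across many cases may be worth a closer look.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """One human review of an AI-assisted decision (hypothetical schema)."""
    case_id: str
    reviewer: str
    ai_decision: str
    final_decision: str
    checklist_completed: bool
    reason: str = ""
    reviewed_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def __post_init__(self):
        if not self.checklist_completed:
            raise ValueError("Complete the review checklist before logging a decision")
        if self.overridden and not self.reason.strip():
            raise ValueError("Overriding the AI requires a recorded reason")

    @property
    def overridden(self) -> bool:
        return self.final_decision != self.ai_decision


def override_rate(records) -> float:
    """Share of cases where the reviewer changed the AI's decision."""
    return sum(r.overridden for r in records) / len(records) if records else 0.0


log = [
    ReviewRecord("case-001", "r.patel", "reject", "approve", True,
                 reason="Income evidence uploaded late was not seen by the model"),
    ReviewRecord("case-002", "r.patel", "approve", "approve", True),
]
print(override_rate(log))  # 0.5
```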

Setting Confidence Thresholds

Not every decision needs human input. That’s where confidence thresholds come in. These are pre-set cut-offs: whenever the AI’s confidence in an output falls below the cut-off, the case is flagged for manual review instead of being actioned automatically. [18]

To make this work smoothly, you can automate the process. In production, send any output that falls below the threshold straight to a reviewer’s queue; in your CI/CD pipelines, use "threshold gating" to stop a model version from being released if it misses its agreed thresholds. [5] For critical systems, consider adding a "stop button" that allows a human to interrupt or override the AI in real time. [3] It’s also wise to have fallback processes, like hybrid models or manual workflows, ready to step in if the AI doesn’t meet its confidence thresholds. [18] Regularly update these thresholds using post-market monitoring data to keep them relevant as your system evolves. [3]
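At runtime, the routing logic itself is small. This sketch assumes the model returns a probability per label and uses a hypothetical 0.85 threshold; the `halted` flag stands in for the "stop button" by sending every case to people. Raw model scores are not always well calibrated, so it's worth checking that a stated 0.85 really corresponds to roughly 85% accuracy before relying on it.

```python
from dataclasses import dataclass


@dataclass
class RoutedDecision:
    case_id: str
    label: str
    confidence: float
    route: str  # "automated" or "human_review"


def route_prediction(case_id: str, probabilities: dict, threshold: float = 0.85,
                     halted: bool = False) -> RoutedDecision:
    """Send low-confidence outputs, or everything while halted, to a human reviewer."""
    label = max(probabilities, key=probabilities.get)
    confidence = probabilities[label]
    if halted or confidence < threshold:
        route = "human_review"
    else:
        route = "automated"
    return RoutedDecision(case_id, label, confidence, route)


print(route_prediction("case-101", {"approve": 0.93, "reject": 0.07}).route)  # automated
print(route_prediction("case-102", {"approve": 0.61, "reject": 0.39}).route)  # human_review
print(route_prediction("case-103", {"approve": 0.97, "reject": 0.03}, halted=True).route)  # human_review
```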

By embedding strong oversight measures, you’ll not only boost transparency but also ensure ethical accountability throughout your AI’s lifecycle.

If you’re finding it challenging to set up these oversight mechanisms or need help navigating compliance requirements, Metamindz (https://metamindz.co.uk) offers hands-on support from experienced CTOs who know the ins and outs of AI deployment and regulation.

Monitoring and Improving AI Systems

Once you've deployed your AI system, the real work begins. This is where continuous oversight comes into play. Even with strong ethical frameworks and transparency measures in place, you need to keep a close eye on your model to ensure it stays accurate, fair, and compliant. Why? Because the real world doesn't behave like a controlled test environment. Data evolves, user demographics shift, and if you're not paying attention, your AI could slowly veer into unfair or unreliable territory.

Consider this: in 2024, 78% of organisations reported using AI in at least one business function - a big leap from 55% just the year before[5]. That’s a lot of systems that need careful watching.

Creating Post-Market Monitoring Plans

A solid monitoring plan is your first line of defence. This isn't just about testing in the lab; it's about tracking how your AI behaves out in the wild. Start by setting up systems to collect and analyse live performance data. Regularly check for accuracy, fairness, and any unexpected biases that could creep in as your AI interacts with new and evolving datasets[6][5].

One key thing to watch for is drift. Data drift happens when the inputs your model sees start to differ from the data it was trained on; concept drift happens when the relationship between those inputs and the outcomes you’re predicting changes. If left unchecked, either can seriously impact your AI's reliability [9]. Keep a log of incidents and "near-misses" - these are moments when something went wrong or nearly did, even if no harm was caused. Analysing these can help you spot trends and address risks before they become full-blown problems [3].
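One common way to quantify data drift for a single feature is the Population Stability Index (PSI), which compares the share of values falling into each band in your training data with the share in live data. The sketch below uses made-up income figures and bands. The cut-offs of 0.1 and 0.25 are a widely quoted industry rule of thumb rather than a regulatory standard, so treat them as a starting point.

```python
import math
from bisect import bisect_right


def bin_proportions(values, edges, eps=1e-6):
    """Share of values falling in each band defined by sorted `edges`."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # eps stops empty bands producing log(0) or division by zero
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference, live, edges) -> float:
    """PSI = sum over bands of (actual - expected) * ln(actual / expected)."""
    expected = bin_proportions(reference, edges)
    actual = bin_proportions(live, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


edges = [30, 40, 50]  # income bands in £000s (illustrative)
training_incomes = [20, 25, 30, 35, 40, 45, 50, 55]
this_month = [22, 31, 38, 44, 48, 52, 58, 61]

psi = population_stability_index(training_incomes, this_month, edges)
print(round(psi, 3))  # 0.137
if psi > 0.25:
    status = "significant drift: open an incident and review the model"
elif psi > 0.1:
    status = "moderate drift: investigate"
else:
    status = "stable"
print(status)  # moderate drift: investigate
```

PSI only watches the inputs; catching concept drift also needs outcome data, such as comparing live accuracy against the accuracy recorded at deployment.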

It’s also important to run regular bias audits. Sometimes, discriminatory patterns can emerge after deployment due to changes in user behaviour or demographics. This so-called "fairness regression" can be tricky to catch, but it's crucial to nip it in the bud[3][5].

And don’t treat your compliance documents like static files. For example, Data Protection Impact Assessments (DPIAs) should be living, breathing documents. Update them whenever there’s a change in how your AI processes data or when new risks emerge[9]. Remember, failing to comply with regulations like the EU AI Act or GDPR can result in hefty fines, so staying on top of this is non-negotiable[3].

When you spot an issue, act fast. Delaying only makes things worse.

Fixing Issues and Updating Systems

Once monitoring flags a problem - whether it's bias, a security gap, or declining performance - you need to jump into action. This is where having a clear incident response plan makes all the difference[6][5]. For example, if your AI’s purpose changes or it starts generating new types of data, update your privacy notices and inform users within a month[9].

In high-stakes areas like healthcare or finance, human oversight is essential. You need people who can interpret the AI’s outputs and step in when something doesn’t look right[5].

It’s also smart to schedule deep-dive audits every six months to ensure your system stays aligned with new regulations and ethical standards. Automate fairness and robustness checks in your CI/CD pipelines so you can catch issues before they ever reach production[5]. And don’t forget to train your team. They should know how to spot ethical risks and understand the data protection laws that apply to your AI outputs[14][9].

Feeling overwhelmed? If setting up a monitoring plan or navigating compliance feels like too much, companies like Metamindz (https://metamindz.co.uk) offer hands-on, CTO-led support to help you keep your AI systems running smoothly and responsibly.

Getting Stakeholder Input

Your AI system doesn’t operate in a bubble - it affects a range of people: users, employees, partners, and even entire communities. To navigate the risks and responsibilities that come with AI, you need input from all these groups. Relying solely on your technical team to spot every ethical issue or compliance risk just won’t cut it. Instead, involve senior management, Data Protection Officers (DPOs), ethicists, legal experts, and - most importantly - the people whose data you’re using. Each group offers a different perspective that can help shape a smarter, safer AI strategy and avoid costly missteps.

"You cannot delegate these issues to data scientists or engineering teams. Your senior management, including DPOs, are also accountable for understanding and addressing them appropriately and promptly." - Information Commissioner's Office (ICO) [9]

This process ties directly to the need for ongoing monitoring and refinement, ensuring your AI evolves responsibly.

Identifying Stakeholders and Their Needs

Start by mapping out all the stakeholders your AI system touches. Internal stakeholders, such as developers, project managers, and executives, can provide insight into operational and technical hurdles. Meanwhile, external stakeholders - like end-users, regulators, and community representatives - offer a broader view of how the AI impacts their lives and whether it aligns with societal norms and expectations[20][21].

When conducting a Data Protection Impact Assessment (DPIA), make sure to document these perspectives, especially from individuals whose data you’re processing or their representatives[9]. Listening to those directly affected allows you to identify issues that technical audits might overlook, such as "representational harms" (e.g., stereotyping) and "allocative harms" (e.g., unfair distribution of resources or opportunities)[9].

Stakeholder input isn’t a one-and-done deal. Your DPIA should be a living document that evolves as demographics shift or user behaviour changes, since shifts like these can cause data drift or concept drift [9][3]. To keep things user-friendly, assign a human point of contact for questions or disputes about AI-driven decisions[4]. This ongoing feedback loop ensures your AI system stays relevant and responsive to its real-world impact.

Working with Experts

Once you’ve gathered input from internal stakeholders, it’s time to bring in external experts to refine your AI strategy even further. Assemble a multidisciplinary team that includes ethicists, data scientists, legal professionals, and policymakers. Regular evaluations and fairness audits with this group can help you uncover context-specific risks, stay compliant with regulations like UK GDPR, and minimise unintentional bias[6][9].

Use a mix of quantitative and qualitative methods to collect feedback. Surveys and questionnaires are great for gathering input from large groups and tracking trends over time. For deeper insights, consider interviews and focus groups to uncover nuanced concerns and expectations[19][21].

Tailor your communication to your audience. Provide technical details for specialists, but also create clear, high-level summaries for the general public[14][9]. Once you’ve analysed the feedback, share follow-up reports to show how stakeholder insights have influenced your decisions. This transparency builds trust and encourages people to stay engaged[20][21].

"Responsible AI prioritises ethical safeguards that reduce bias, improve transparency, and maintain compliance with developing regulations." - Lumenalta [6]

Training Teams on Responsible AI

Ethical AI practices don't stand a chance without skilled teams to back them up. Since 2nd February 2025, the EU AI Act has required providers and deployers of AI systems to take measures to ensure a sufficient level of AI literacy among their staff [3]. This makes it clear: having knowledgeable teams isn't just a "nice-to-have"; it's essential for ongoing oversight and staying compliant. But training isn't a one-off thing. It needs to keep up with changing regulations, systems, and risks. The trick? Focus on practical, role-specific education that fits into everyday workflows - no tick-box exercises here.

"Your senior management, including DPOs, are also accountable for understanding and addressing [AI risks] appropriately and promptly... in addition to their own upskilling, your senior management will need diverse, well-resourced teams to support them." - Information Commissioner's Office (ICO) [9]

Teaching Ethical Best Practices

To meet regulatory requirements and go beyond them, your team needs solid, practical ethical AI training. Start with the basics: fairness, transparency, privacy, accountability, and reliability [5]. But don't stop at theory. Teams need to recognise issues like "allocative harms" (e.g., biased hiring algorithms that deny opportunities unfairly) and "representational harms" (e.g., models that reinforce stereotypes or demean certain groups) [9].

Get hands-on by teaching your team to use tools like model cards and datasheets. These help them understand the limits and potential failure points of the AI systems they're working with. Here's how you can tailor training to different roles:

- Technical staff: Teach them to detect bias, use privacy-preserving methods like differential privacy or federated learning, and build automated fairness checks into CI/CD pipelines [5].

- Human oversight teams: Focus on interpreting AI outputs and knowing when to step in and hit the "stop button" if something seems off [3].

- Senior management and DPOs: Ensure they’re equipped to take responsibility, rather than leaving everything to the tech teams [9].

Run practical workshops using common AI tools to embed ethical practices into their workflows. For example, incorporate compliance checks into sprint planning or user stories so ethics becomes part of the development process, not an afterthought. Also, set up a feedback system where employees can safely report concerns or biases. This fosters a culture where responsible AI isn't just a policy - it’s how things are done [22].

Running Regular Security and Privacy Audits

Training is only as good as the checks and balances you put in place. Regular audits help ensure that your team's day-to-day practices align with your policies. Keep your DPIAs (Data Protection Impact Assessments) up to date, adjusting them whenever your AI processes change [9]. Plan quarterly audits to check compliance with evolving standards like the EU AI Act [3][5]. Automate CI/CD gates to block non-compliant models from making it into production [5].

For systems with higher risks, consider red-teaming - a method where you deliberately test your models to find vulnerabilities, such as susceptibility to manipulation or security flaws [3]. Regularly review tools like model cards and datasheets to keep track of limitations and biases [22][3]. Make sure "human-in-the-loop" processes are meaningful - reviewers should have the authority to override AI decisions, especially in critical areas like hiring or credit scoring [9][3].

To keep everyone accountable, tie responsible AI practices to performance reviews. Create a cross-functional AI ethics board with members from legal, security, and engineering teams. This board can review policies and handle high-stakes cases [5]. The point isn’t to slow your team down - it’s about catching problems early before they turn into expensive fines or PR nightmares. Responsible AI is all about being proactive, not reactive.

Conclusion

Transparency and ethics are the bedrock of trustworthy AI. As GOV.UK puts it: "Transparency is foundational to all other ethical principles. Being transparent allows for scrutiny of actions, decisions and processes" [1]. Without transparency, AI systems risk losing user trust, falling foul of regulators, and failing audits - none of which are small issues. The penalties are serious: under the EU AI Act, fines can hit €35 million or 7% of global annual turnover, while GDPR violations carry penalties of up to €20 million or 4% of global annual turnover [3]. That’s a hefty price tag for getting it wrong.

This checklist bridges the gap between ethical ideals and practical steps. It covers everything from setting clear principles and documenting models to actively detecting bias and keeping human oversight in place. Why does this matter? Because 60% of AI developers admit they only react to compliance issues as they arise [7]. That’s a risky and often expensive mistake. Building compliance into your process from the start - what’s often called "compliance-by-design" - can save not just money but also time and your reputation [7].

But let’s be clear: AI compliance isn’t a one-and-done deal. It’s a continuous process. You’ll need quarterly reviews, annual audits, constant monitoring for model drift, and regular team training [2]. Done right, this isn’t just about ticking boxes - it’s a way to foster innovation. Businesses that embrace ethical practices early often find themselves ahead of the curve, building trust and avoiding the costly rework that new regulations can bring [3][7].

To make this manageable, start with your high-risk systems - think hiring algorithms, credit scoring models, or tools used in medical diagnosis. Focus your resources there first, then expand outward [2]. Keep your documentation up to date, integrate fairness checks into your CI/CD pipelines, and make sure your team understands not just what they’re doing but why responsible AI matters [5].

At Metamindz, we believe transparency and ethics aren’t just buzzwords - they’re key to thriving in a constantly evolving regulatory environment. By treating them as core business priorities, organisations can turn compliance into a long-term advantage.

FAQs

Is my AI classed as “high-risk” under the EU AI Act?

The EU AI Act categorises certain AI systems as "high-risk" based on their purpose, the sector they operate in, and their functionalities. This classification isn't arbitrary - it’s about identifying systems that could have a major impact on health, safety, or fundamental rights.

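To figure out if your AI system falls into this category, check two routes. First, is it a safety component of a product (or itself a product) covered by the EU product-safety laws listed in Annex I, such as those for medical devices, where a third-party conformity assessment is required? Second, is it used in one of the areas listed in Annex III, such as biometric identification, critical infrastructure, education, employment and recruitment, access to essential services like credit scoring, law enforcement, migration, or the administration of justice? Article 6 also sets out narrow exceptions for Annex III systems that don’t pose a significant risk, so check the detailed criteria before drawing conclusions.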

If your AI system is classified as high-risk, be prepared to follow stricter rules. These include a risk management system, data governance, technical documentation and record-keeping, transparency towards deployers, human oversight, and requirements for accuracy, robustness and cybersecurity.

What qualifies as a valid explanation under UK GDPR for AI decisions?

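Under the UK GDPR, any explanation of how an AI system uses personal data needs to be clear, concise, and easy to understand. Where decisions are solely automated and have legal or similarly significant effects, you must give people meaningful information about the logic involved, as well as the significance and envisaged consequences of the processing. In practice, spell out what personal data is used, why it's being processed, and the main reasons behind a particular decision, and tell people how they can ask for human review or contest the outcome. Avoid overly technical terms or complex language - stick to plain, accessible wording so that anyone, regardless of their technical knowledge, can grasp the explanation. Transparency is key here.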

How often should we run bias audits and model-drift checks?

Regular checks for bias and model drift are essential to keep things running smoothly. A good rule of thumb? Tie these audits to system updates or, at the very least, conduct them once a year. On top of that, keep an eye on key metrics like accuracy and bias. This kind of ongoing monitoring helps maintain compliance and ensures your system stays reliable over time.